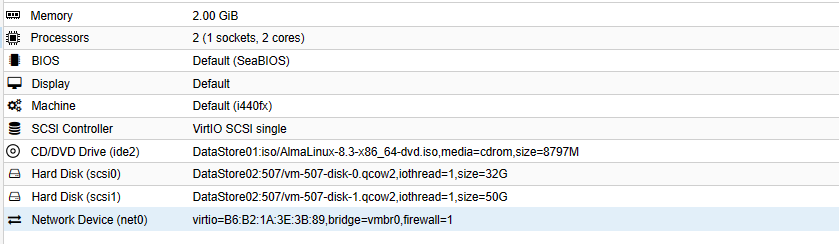

Node-hadoop07 (DataNode)

- Public LAN: 192.168.1.57/24

- Private LAN: 172.16.185.57/24

System update

[root@node-hadoop07 ~]# yum update -y

Disabling SELinux

[root@node-hadoop07 ~]# setenforce 0

[root@node-hadoop07 ~]# sed -i 's/SELINUX=enforcing/SELINUX=disabled/g' /etc/selinux/config
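The `sed` expression above only matches `SELINUX=enforcing`, so a host already set to `permissive` would be left unchanged. A minimal sketch of a read-back check follows; the helper name `selinux_config_mode` is hypothetical and not part of the original procedure.

```shell
# Hypothetical helper (not from the original procedure): print the mode
# configured on the SELINUX= line of an SELinux config file.
selinux_config_mode() {
    # $1: path to the config file, defaults to /etc/selinux/config
    sed -n 's/^SELINUX=\(.*\)$/\1/p' "${1:-/etc/selinux/config}" | tail -n 1
}
```

Running `selinux_config_mode` after the `sed` should print `disabled`. The runtime mode reported by `getenforce` stays permissive until the next reboot, since `setenforce 0` does not fully disable SELinux.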

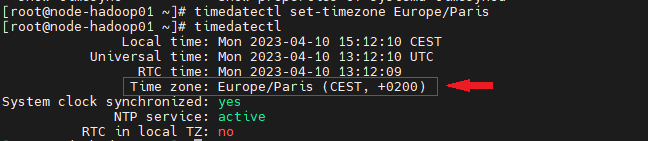

Set the time zone to Europe/Paris

[root@node-hadoop07 ~]# timedatectl set-timezone Europe/Paris

Installing prerequisites

[root@node-hadoop07 ~]# dnf install wget tar sshpass vim -y

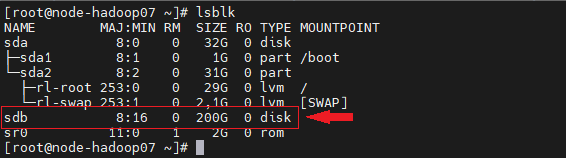

Creating the HDFS disk on node07

[root@node-hadoop07 ~]# lsblk

Preparing the disk

[root@node-hadoop07 ~]# parted -s /dev/sdb mklabel msdos

[root@node-hadoop07 ~]# parted -s /dev/sdb mkpart primary 1MiB 100%
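Because `parted -s` rewrites the partition table without asking, it can help to confirm first that the target disk is still empty. The sketch below assumes a hypothetical helper `has_partitions` that reads the output of `lsblk -n -o TYPE <device>` on standard input. Both the helper and the guard are illustrative additions, not part of the original steps.

```shell
# Hypothetical guard: succeeds (exit 0) if the lsblk TYPE listing read from
# standard input contains at least one partition entry.
has_partitions() {
    awk '$1 == "part" { found = 1 } END { exit !found }'
}

# Intended use, left commented out because it needs the real device:
# if lsblk -n -o TYPE /dev/sdb | has_partitions; then
#     echo "/dev/sdb already has partitions, aborting" >&2
# fi
```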

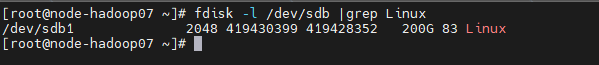

[root@node-hadoop07 ~]# fdisk -l /dev/sdb |grep Linux

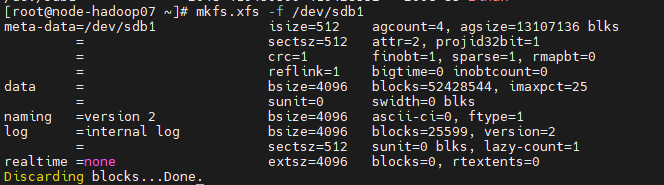

Formatting the disk

[root@node-hadoop07 ~]# mkfs.xfs -f /dev/sdb1

Accounts and directory structure

Creating the mount point

[root@node-hadoop07 ~]# mkdir /hadoop_dir

[root@node-hadoop07 ~]# echo "/dev/sdb1 /hadoop_dir xfs defaults 0 0" >> /etc/fstab

[root@node-hadoop07 ~]# mount /hadoop_dir
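Appending to `/etc/fstab` with `>>` adds a duplicate line every time the step is repeated. One possible safeguard, sketched here with a hypothetical helper `add_fstab_entry`, appends the entry only when no active line already uses the mount point.

```shell
# Hypothetical helper: append an fstab entry only when no active (non-comment)
# line already uses the same mount point.
add_fstab_entry() {
    # $1: fstab path, $2: device, $3: mount point, $4: filesystem type
    if awk -v mp="$3" '$1 !~ /^#/ && $2 == mp { found = 1 } END { exit !found }' "$1"; then
        return 0    # entry already present, nothing to do
    fi
    printf '%s %s %s defaults 0 0\n' "$2" "$3" "$4" >> "$1"
}
```

For the disk prepared above, the call would be `add_fstab_entry /etc/fstab /dev/sdb1 /hadoop_dir xfs`.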

Creating the hadoop user and group

[root@node-hadoop07 ~]# groupadd hadoop

[root@node-hadoop07 ~]# useradd hduser

[root@node-hadoop07 ~]# passwd hduser

[root@node-hadoop07 ~]# usermod -G hadoop hduser

Creating the HDFS directory structure

[root@node-hadoop07 ~]# mkdir -p /hadoop_dir/hdfs/datanode

[root@node-hadoop07 ~]# chown hduser:hadoop -R /hadoop_dir

Creating the sshpass password files

[root@node-hadoop07 ~]# su - hduser

[hduser@node-hadoop07 ~]$ ssh-keygen

[hduser@node-hadoop07 ~]$ echo "Mot_de_pass_hduser" > /home/hduser/.hduser

[hduser@node-hadoop07 ~]$ chmod 600 /home/hduser/.hduser

[hduser@node-hadoop07 ~]$ su -

[root@node-hadoop07 ~]# echo "Mot_de_pass_root" > /root/.passr

[root@node-hadoop07 ~]# chmod 600 /root/.passr
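Writing the password with `echo` and tightening the mode afterwards leaves a short window in which the file has default permissions. One possible variant, sketched with a hypothetical helper `write_secret_file`, sets a restrictive umask before the file is created. The password still passes through the shell command line, as in the original commands.

```shell
# Hypothetical helper: create a password file that is private from the start.
# The body runs in a subshell, so the umask change does not leak to the caller.
write_secret_file() (
    # $1: destination path, $2: secret to store
    umask 077
    printf '%s\n' "$2" > "$1"
)
```

The umask only applies to newly created files. If the destination already exists, it keeps its current mode and `chmod 600` is still needed.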

Adding the cluster's private and public hosts

Copy the source /etc/hosts file from the NameNode to node07

[root@node-hadoop07 ~]# sshpass -f /root/.passr scp root@192.168.1.50:/etc/hosts /etc/hosts

Add node07 to /etc/hosts

[root@node-hadoop07 ~]# echo "# New Node07" >> /etc/hosts

[root@node-hadoop07 ~]# echo "172.16.185.57 node-hadoop07" >> /etc/hosts

[root@node-hadoop07 ~]# echo "192.168.1.57 hadoop07" >> /etc/hosts

Deploy the new /etc/hosts file to the cluster nodes

[root@node-hadoop07 ~]# for ssh in `cat /etc/hosts |grep node |awk '{print $2}'|grep -v $HOSTNAME`;do sshpass -f /root/.passr scp -o StrictHostKeyChecking=no /etc/hosts root@${ssh}:/etc/hosts ; done
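The loop above builds its target list by taking the second column of every `/etc/hosts` line that contains `node` and dropping lines that contain the local host name. A slightly stricter version of that selection is sketched below. The helper name `list_cluster_nodes` is hypothetical, and it assumes the host name is always the second field.

```shell
# Hypothetical helper: list cluster host names from a hosts file, excluding
# the local node. Unlike the grep pipeline, it skips comment lines and
# compares the host name exactly instead of by substring.
list_cluster_nodes() {
    # $1: hosts file, $2: local host name to exclude
    awk -v self="$2" '
        $1 ~ /^#/ { next }                          # ignore comment lines
        $2 ~ /node/ && $2 != self { print $2 }      # second column = host name
    ' "$1"
}
```

It could replace the backtick pipeline in the loops of this procedure, for example `for ssh in $(list_cluster_nodes /etc/hosts "$HOSTNAME"); do ... ; done`.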

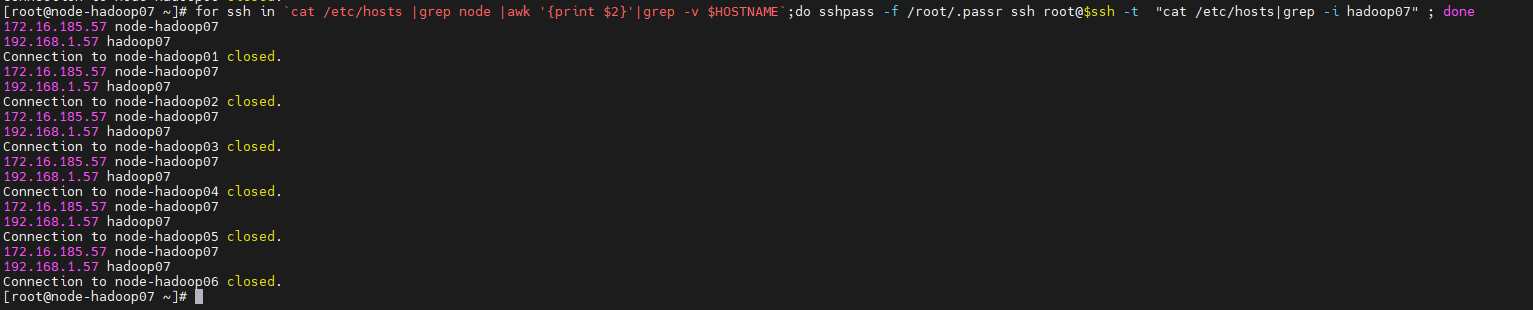

Check the deployment of "hosts" on the Hadoop cluster nodes

[root@node-hadoop07 ~]# for ssh in `cat /etc/hosts |grep node |awk '{print $2}'|grep -v $HOSTNAME`;do sshpass -f /root/.passr ssh root@$ssh -t "cat /etc/hosts|grep -i hadoop07" ; done

Adding the hduser SSH key

Copy the "authorized_keys" file from the NameNode

[root@node-hadoop07 ~]# su - hduser

[hduser@node-hadoop07 ~]$ sshpass -f /home/hduser/.hduser scp hduser@node-hadoop01:/home/hduser/.ssh/authorized_keys /home/hduser/.ssh/authorized_keys

Add node07's public key to "authorized_keys"

[hduser@node-hadoop07 ~]$ cat /home/hduser/.ssh/id_rsa.pub >> /home/hduser/.ssh/authorized_keys

Deploy the "authorized_keys" file to the Hadoop cluster nodes

[hduser@node-hadoop07 ~]$ for ssh in `cat /etc/hosts |grep node |awk '{print $2}'|grep -v $HOSTNAME`;do sshpass -f /home/hduser/.hduser scp -o StrictHostKeyChecking=no /home/hduser/.ssh/authorized_keys hduser@${ssh}:/home/hduser/.ssh/authorized_keys ; done

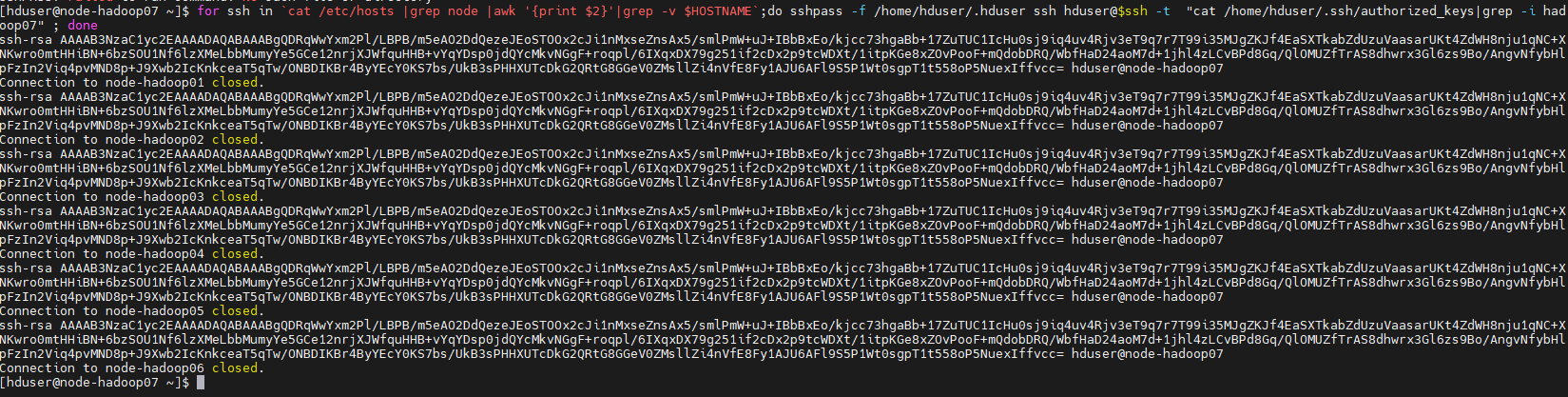

Check the deployment of "authorized_keys" on the Hadoop cluster nodes

[hduser@node-hadoop07 ~]$ for ssh in `cat /etc/hosts |grep node |awk '{print $2}'|grep -v $HOSTNAME`;do sshpass -f /home/hduser/.hduser ssh hduser@$ssh -t "cat /home/hduser/.ssh/authorized_keys|grep -i hadoop07" ; done

Fetch the required packages (from the NameNode)

[hduser@node-hadoop07 ~]$ scp hduser@node-hadoop01:/home/hduser/jdk-8u202-linux-x64.tar.gz .

[hduser@node-hadoop07 ~]$ scp hduser@node-hadoop01:/home/hduser/hadoop-3.3.5.tar.gz .

Installing the packages (all nodes)

JDK package

Installing the JDK

[hduser@node-hadoop07 ~]$ su

[root@node-hadoop07 hduser]# cd /home/hduser

[root@node-hadoop07 hduser]# tar -xvzf jdk-8u202-linux-x64.tar.gz -C /opt

Hadoop package

Installing Hadoop

[root@node-hadoop07 hduser]# tar -xzvf hadoop-3.3.5.tar.gz -C /opt/

[root@node-hadoop07 hduser]# mv /opt/hadoop-3.3.5/ /opt/hadoop/

[root@node-hadoop07 hduser]# chown -R hduser:hadoop /opt/hadoop/

Adding environment variables (hduser)

[root@node-hadoop07 hduser]# su - hduser

[hduser@node-hadoop07 ~]$ vi ~/.bashrc

export PATH=${PATH}:/opt/jdk1.8.0_202/bin

#export JAVA_HOME=/opt/jdk1.8.0_202

#export PATH=$JAVA_HOME/bin:$PATH

export JAVA_HOME=$(readlink -f /opt/jdk1.8.0_202/bin/java | sed "s:/bin/java::")

export HADOOP_HOME=/opt/hadoop

export HADOOP_INSTALL=$HADOOP_HOME

export YARN_HOME=$HADOOP_HOME

export PATH=$PATH:$HADOOP_INSTALL/bin

export HADOOP_COMMON_LIB_NATIVE_DIR=$HADOOP_HOME/lib/native

#export HADOOP_OPTS="-Djava.library.path=$HADOOP_HOME/lib"

export HADOOP_OPTS="-Djava.library.path=$HADOOP_HOME/lib/native"

export PATH=/opt/jdk1.8.0_202/bin:$PATH

[hduser@node-hadoop07 ~]$ source ~/.bashrc
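After sourcing the file, it may be worth confirming that the variables point at directories that actually exist before starting any Hadoop daemon. The following is a minimal sketch; the helper name `check_env_dir` is hypothetical.

```shell
# Hypothetical check: report whether a variable names an existing directory.
check_env_dir() {
    # $1: variable name, for example JAVA_HOME or HADOOP_HOME
    eval "dir_value=\${$1:-}"
    if [ -n "$dir_value" ] && [ -d "$dir_value" ]; then
        echo "$1 ok: $dir_value"
    else
        echo "$1 missing or not a directory" >&2
        return 1
    fi
}
```

A typical call would be `check_env_dir JAVA_HOME && check_env_dir HADOOP_HOME`.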

Adding the Hadoop logs directory

[hduser@node-hadoop07 ~]$ mkdir /opt/hadoop/logs

[hduser@node-hadoop07 ~]$ chown -R hduser:hadoop /opt/hadoop/logs

Hadoop configuration

Set JAVA_HOME

Import the mapred-env.sh configuration file (from the master)

[hduser@node-hadoop07 ~]$ scp hduser@node-hadoop01:/opt/hadoop/etc/hadoop/mapred-env.sh /opt/hadoop/etc/hadoop/mapred-env.sh

Hadoop site configuration

Import the core-site.xml file (from the master)

[hduser@node-hadoop07 ~]$ scp hduser@node-hadoop01:/opt/hadoop/etc/hadoop/core-site.xml /opt/hadoop/etc/hadoop/core-site.xml

Import the yarn-site.xml file (from the master)

[hduser@node-hadoop07 ~]$ scp hduser@node-hadoop01:/opt/hadoop/etc/hadoop/yarn-site.xml /opt/hadoop/etc/hadoop/yarn-site.xml

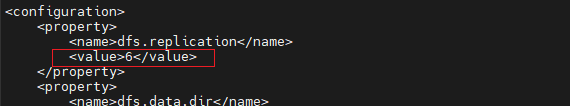

HDFS configuration (block-based distributed file system)

Import the hdfs-site.xml file (from the master)

[hduser@node-hadoop07 ~]$ scp hduser@node-hadoop01:/opt/hadoop/etc/hadoop/hdfs-site.xml /opt/hadoop/etc/hadoop/hdfs-site.xml

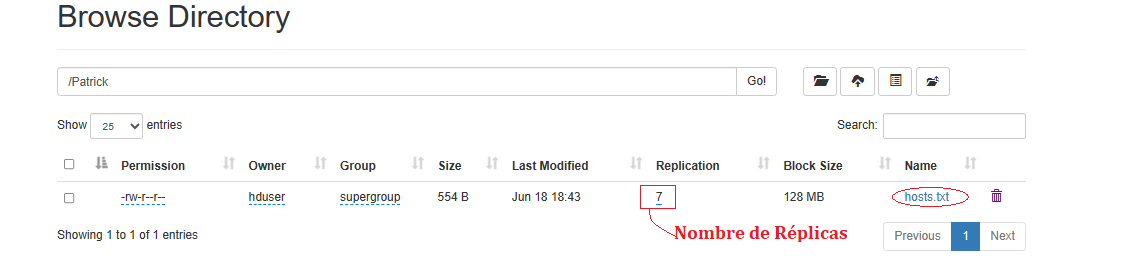

Change the replica count in the hdfs-site.xml file (master)

[hduser@node-hadoop07 ~]$ cat /opt/hadoop/etc/hadoop/hdfs-site.xml

[hduser@node-hadoop07 ~]$ sed -i 's/<value>6<\/value>/<value>7<\/value>/g' /opt/hadoop/etc/hadoop/hdfs-site.xml
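The `sed` expression above rewrites every `<value>6</value>` in the file, whichever property it belongs to. A narrower variant is sketched below under the assumption that the replica count is stored in the standard `dfs.replication` property, with `<name>` preceding `<value>`. The helper name `set_replication` is hypothetical.

```shell
# Hypothetical helper: set the value of dfs.replication in an hdfs-site.xml
# file, leaving the values of all other properties untouched.
set_replication() {
    # $1: path to hdfs-site.xml, $2: new replication factor
    repl_tmp="$(mktemp)" || return 1
    awk -v new="$2" '
        /<name>dfs\.replication<\/name>/ { in_prop = 1 }
        in_prop && /<value>[0-9]+<\/value>/ {
            sub(/<value>[0-9]+<\/value>/, "<value>" new "</value>")
            in_prop = 0
        }
        { print }
    ' "$1" > "$repl_tmp" && cat "$repl_tmp" > "$1"
    repl_status=$?
    rm -f "$repl_tmp"
    return "$repl_status"
}
```

With this helper the step becomes `set_replication /opt/hadoop/etc/hadoop/hdfs-site.xml 7`, and it does not depend on the previous value being 6.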

Deploy the "hdfs-site.xml" file to the nodes

[hduser@node-hadoop07 ~]$ for ssh in `cat /etc/hosts |grep node |awk '{print $2}'|grep -v $HOSTNAME`;do sshpass -f /home/hduser/.hduser scp -o StrictHostKeyChecking=no /opt/hadoop/etc/hadoop/hdfs-site.xml hduser@${ssh}:/opt/hadoop/etc/hadoop/hdfs-site.xml ; done

Check the deployment of "hdfs-site.xml" on the nodes

[hduser@node-hadoop07 ~]$ for ssh in `cat /etc/hosts |grep node |awk '{print $2}'|grep -v $HOSTNAME`;do sshpass -f /home/hduser/.hduser ssh hduser@$ssh -t "cat /opt/hadoop/etc/hadoop/hdfs-site.xml |grep -i value" ; done

MapReduce configuration

Import the mapred-site.xml file (from the master)

[hduser@node-hadoop07 ~]$ scp hduser@node-hadoop01:/opt/hadoop/etc/hadoop/mapred-site.xml /opt/hadoop/etc/hadoop/mapred-site.xml

Configuring the "workers" file (formerly "slaves")

Import the "workers" file from the NameNode

[hduser@node-hadoop07 ~]$ scp hduser@node-hadoop01:/opt/hadoop/etc/hadoop/workers /opt/hadoop/etc/hadoop/workers

Add node07 to "workers"

[hduser@node-hadoop07 ~]$ echo "192.168.1.57" >> /opt/hadoop/etc/hadoop/workers

Deploy the new "workers" file to the master node

[hduser@node-hadoop07 ~]$ sshpass -f /home/hduser/.hduser scp /opt/hadoop/etc/hadoop/workers hduser@node-hadoop01:/opt/hadoop/etc/hadoop/workers

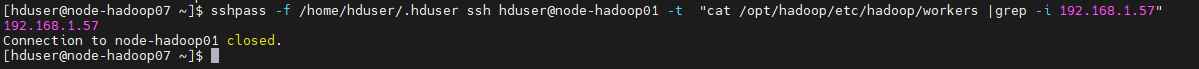

Check the deployment of "workers" on the master node

[hduser@node-hadoop07 ~]$ sshpass -f /home/hduser/.hduser ssh hduser@node-hadoop01 -t "cat /opt/hadoop/etc/hadoop/workers |grep -i 192.168.1.57"

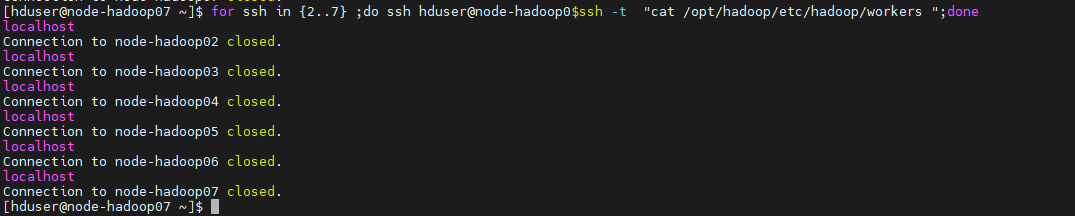

Workers files (DataNodes)

[hduser@node-hadoop07 ~]$ echo "localhost" > /opt/hadoop/etc/hadoop/workers

[hduser@node-hadoop07 ~]$ cat /opt/hadoop/etc/hadoop/workers

localhost

[hduser@node-hadoop07 ~]$ for ssh in {2..7} ;do ssh hduser@node-hadoop0$ssh -t "cat /opt/hadoop/etc/hadoop/workers ";done

Stop firewalld

[root@node-hadoop07 ~]# systemctl stop firewalld

Stop the Hadoop cluster (master)

[hduser@node-hadoop07 ~]$ ssh hduser@node-hadoop01 -t "/opt/hadoop/sbin/stop-all.sh"

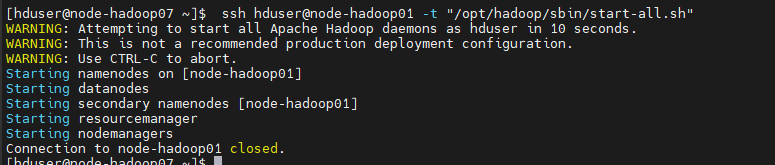

Start the Hadoop cluster (master)

[hduser@node-hadoop07 ~]$ ssh hduser@node-hadoop01 -t "/opt/hadoop/sbin/start-all.sh"

Cluster service status

Status of node-hadoop07

[hduser@node-hadoop07 ~]$ jps
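On a worker node, the `jps` listing would normally be expected to show a `DataNode` and, when YARN is started by `start-all.sh`, a `NodeManager`. That expectation is an assumption about this cluster's layout rather than something stated in the procedure. A small sketch with a hypothetical helper `missing_daemons` turns the visual check into a scripted one.

```shell
# Hypothetical helper: read `jps` output on standard input and print each
# expected daemon name that does not appear in it.
missing_daemons() {
    jps_output="$(cat)"
    for daemon in "$@"; do
        printf '%s\n' "$jps_output" |
            awk -v d="$daemon" '$2 == d { found = 1 } END { exit !found }' ||
            printf '%s\n' "$daemon"
    done
}
```

Running `jps | missing_daemons DataNode NodeManager` prints nothing when both daemons are running.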

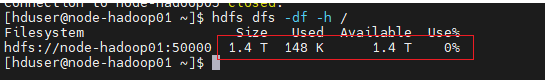

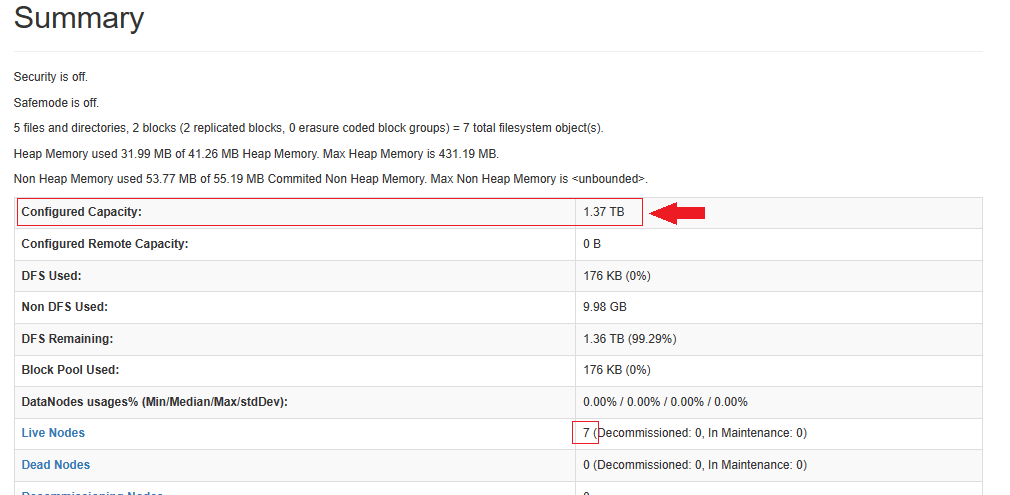

New HDFS size

On the NameNode

[hduser@node-hadoop01 ~]$ hdfs dfs -df -h /

The pool size has grown by 200 GB, as expected.

On the DataNodes

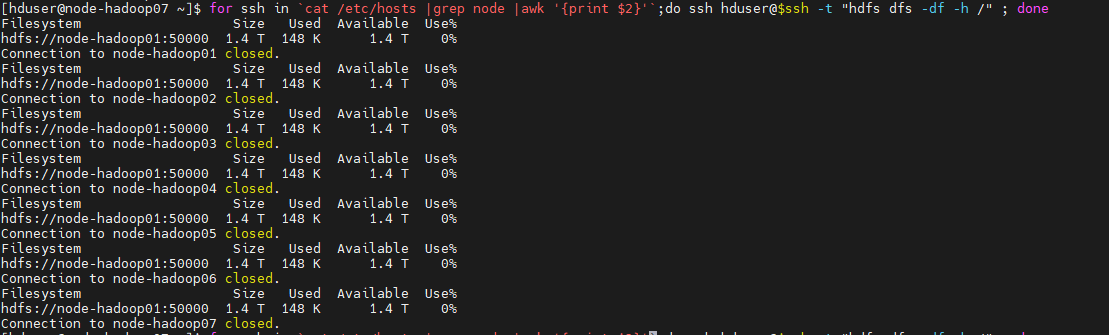

[hduser@node-hadoop07 ~]$ for ssh in `cat /etc/hosts |grep node |awk '{print $2}'`;do ssh hduser@$ssh -t "hdfs dfs -df -h /" ; done

HDFS status

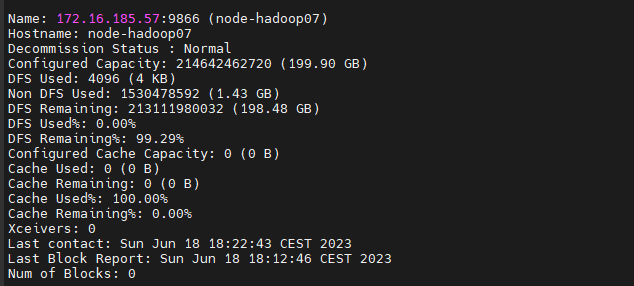

[hduser@node-hadoop07 ~]$ hdfs dfsadmin -report
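`hdfs dfsadmin -report` prints a `Live datanodes (N):` header ahead of the per-node details. The sketch below extracts that count so it can be compared with the 7 nodes expected here; the helper name `live_datanodes` is hypothetical.

```shell
# Hypothetical helper: read `hdfs dfsadmin -report` output on standard input
# and print the number shown in the "Live datanodes (N):" header.
live_datanodes() {
    sed -n 's/^Live datanodes (\([0-9][0-9]*\)):.*$/\1/p'
}
```

For example, `hdfs dfsadmin -report | live_datanodes` should print `7` once node-hadoop07 has joined.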

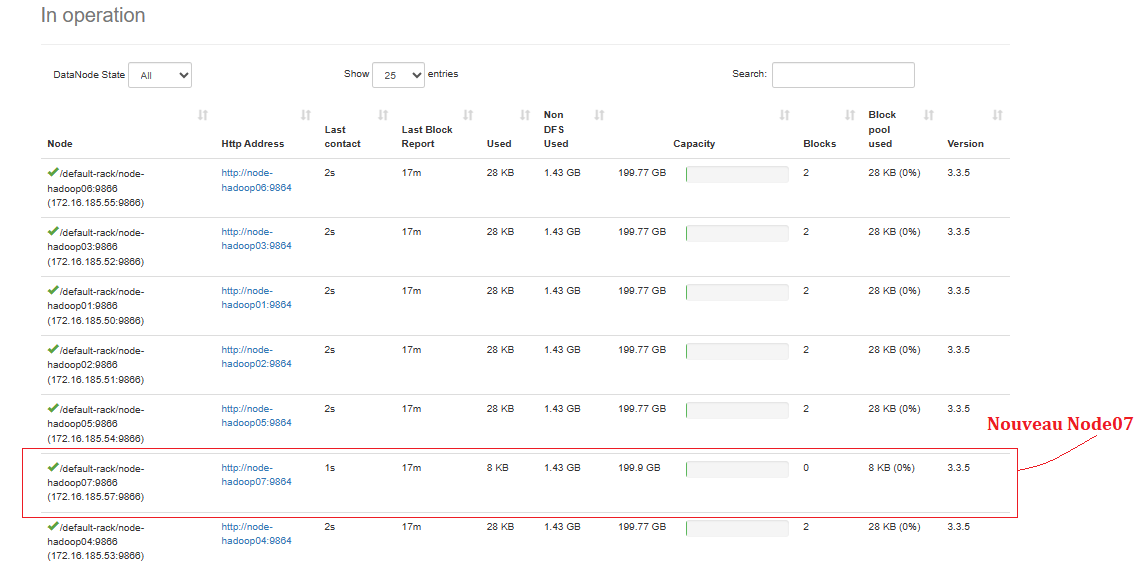

The new node-hadoop07 is now integrated into the Hadoop cluster.

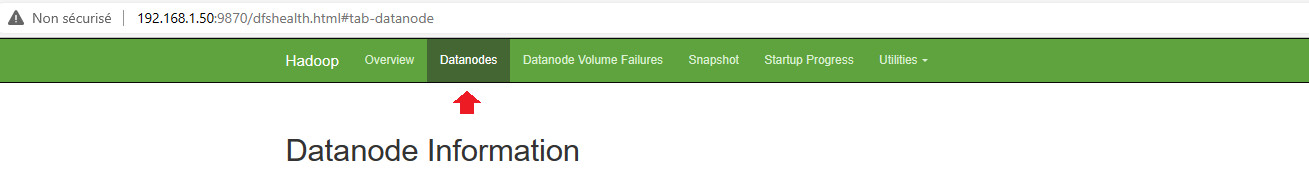

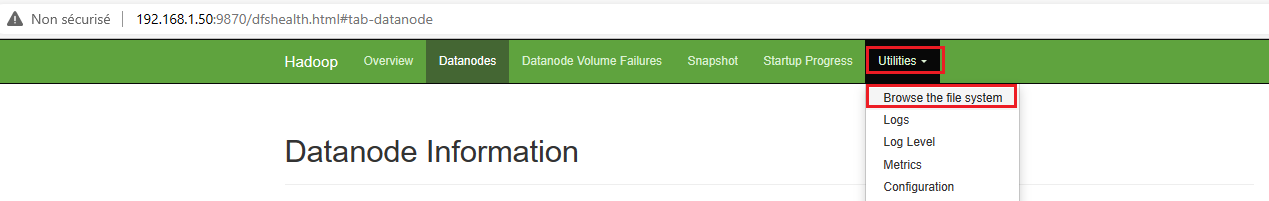

View of the web UI (ResourceManager)

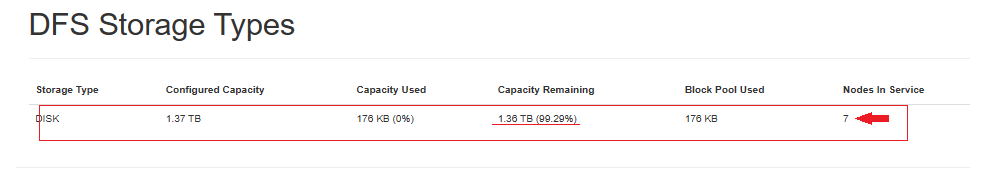

HDFS pool view

DFS pool of 7 Hadoop nodes

View of the HDFS nodes (DataNodes)

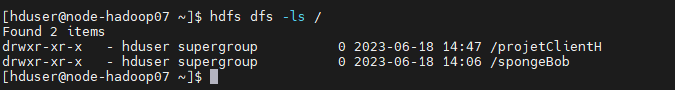

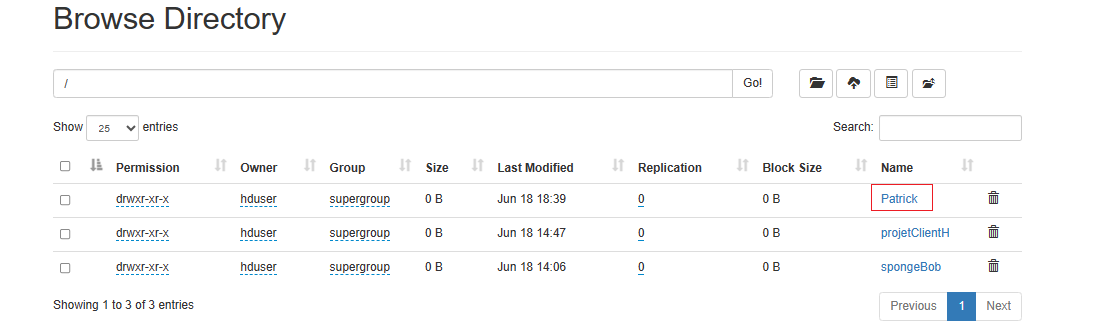

View of the files already present on the cluster, from node07

[hduser@node-hadoop07 ~]$ hdfs dfs -ls /

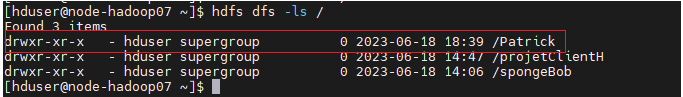

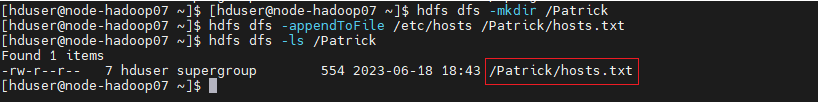

[hduser@node-hadoop07 ~]$ hdfs dfs -mkdir /Patrick

[hduser@node-hadoop07 ~]$ hdfs dfs -ls /

[hduser@node-hadoop07 ~]$ hdfs dfs -appendToFile /etc/hosts /Patrick/hosts.txt

[hduser@node-hadoop07 ~]$ hdfs dfs -ls /Patrick

Replication view (directories/files)
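Besides the web UI, the replication factor is visible in the second column of `hdfs dfs -ls` output for files (directories show `-`). The sketch below uses a hypothetical helper `replication_of` that reads such a listing on standard input and prints each file path with its replication factor. It assumes paths contain no spaces.

```shell
# Hypothetical helper: from `hdfs dfs -ls` output on standard input, print
# "<path> <replication>" for every regular file (mode string starting with "-").
replication_of() {
    awk '$1 ~ /^-/ { print $NF, $2 }'
}
```

For the file created above, the call would be `hdfs dfs -ls /Patrick | replication_of`.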
